The 7 key technological trends to watch in 2024

From cloud spending to AI, the experts at vshosting share their top technology trends for the year ahead.

In the face of cost-cutting exercises and the push for efficiency, Gartner’s latest 2024 worldwide IT spending forecast has predicted that IT services spending is poised to take up a lion’s share of overall IT budgets. This is prompting a strategic emphasis on leveraging IT services to enhance operational efficiency, against the backdrop of economic uncertainties and spiraling operational costs.

With this in mind, we look at some of the key technological trends we will expect to see this year, and expectations from businesses across the board:

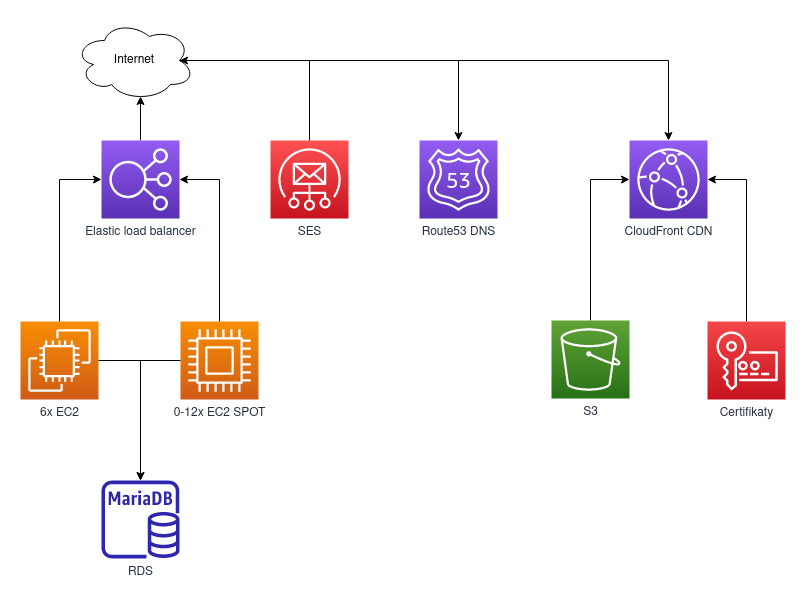

Hosting infrastructures will be expected to adapt and perform

In the aftermath of major events like supply chain disruptions, political events and the AI boom, one key takeaway is that nothing will remain status quo for long. This all points to the need for a flexible, adaptable hosting infrastructure for businesses. Businesses will now look to IT partners who can roll with the punches – and to scale up or down, based on ongoing demand, workloads and needs.

Simply put, there’s a rising demand for hosting that flexes with shifting needs and workloads.

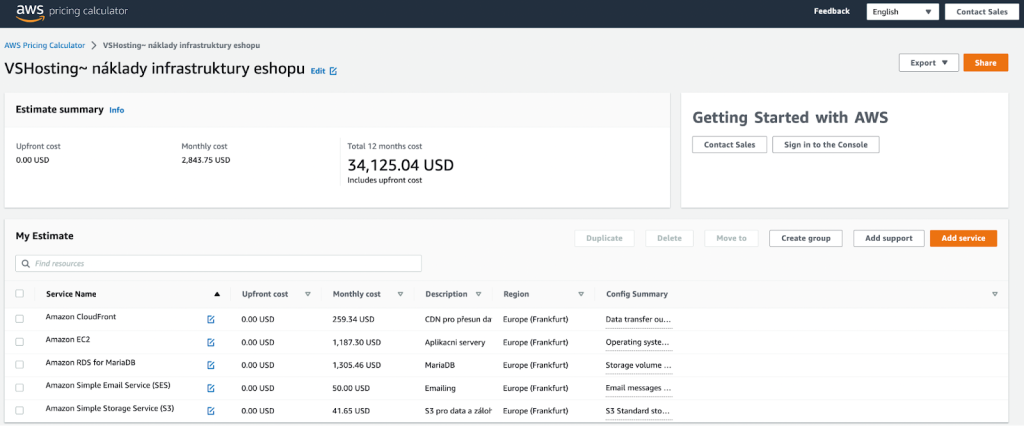

Continuous shift towards cloud optimisation

Businesses migrated to the clouds with the goal to improve performance while streamlining operational costs. But the era of one-size-fits-all cloud solution is shifting, and will continue going into 2024, into a more tailored and dynamic approach. Cloud optimisation strategies, integrating both public and private clouds, would continue to offer the most significant cost saving opportunities.

This year, we can expect to see a greater focus on fighting ‘cloud-flation’, where businesses will look to optimise processes where possible, and adjusting resource scales based on workload requirements, while eliminating underutilised workloads. This would include phasing out generic hosting capabilities, eliminating duplicated workloads and streamlining day-to-day IT storage operations for enhanced efficiencies, among other measures.

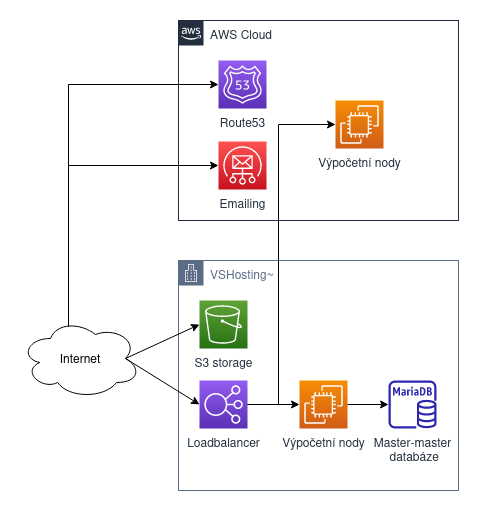

Moving workloads back on prem

As part of the ongoing cloud optimisation exercise, we expect to see more businesses turning to cloud repatriation, or the process of moving data and workload from a public cloud environment back on-prem. According to research by Citrix, a significant number (93%) of IT leaders have already been involved in cloud repatriation project in the last three years, which is unsurprising considering the spiraling cloud costs and the growing need for a scalable approach to cloud infrastructure.

Businesses would also want to explore a safe, and secure way to do so.

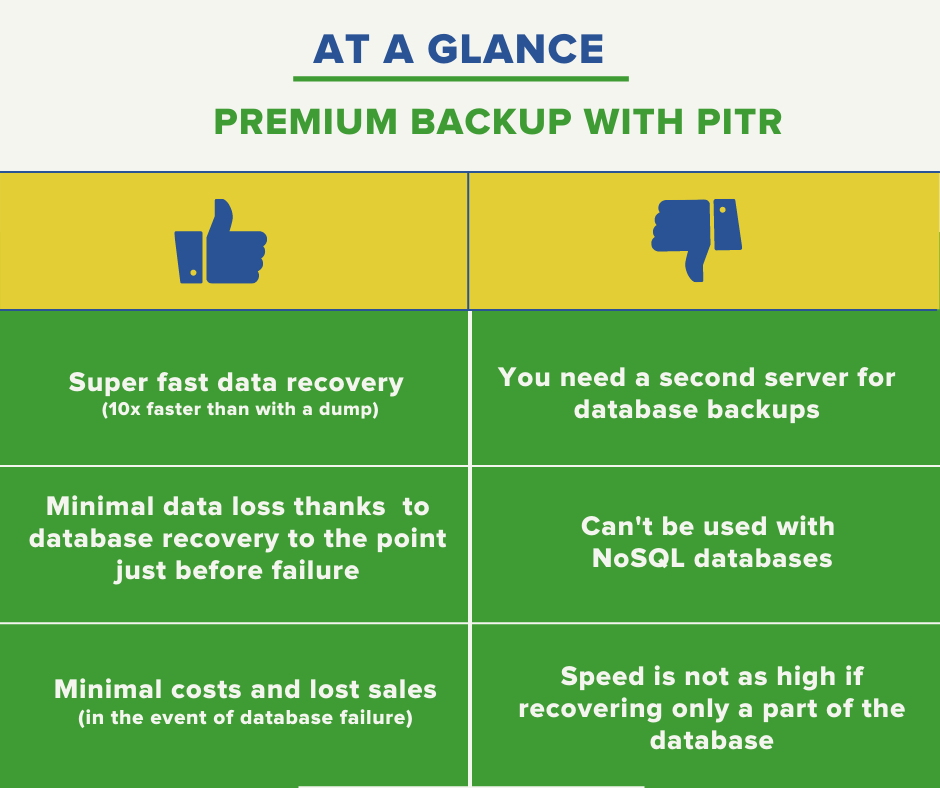

Ransomware resistant backup

In trying to safeguard data while migrating workloads, it’s important to not overlook the data stored in backup systems which form a crucial part of a business’ response and recovery process. Increasingly, threat actors are targeting backup systems and infrastructure, maliciously deleting, or destroying stored data to disrupt operations. Alarmingly, in 75% of these cases, they successfully cripple victims’ ability to recover.

While common practice when dealing with ransomware attacks includes avoiding payment, all backup strategies should adhere to principles that are resilient to destructive actions. This would include measures such as soft-delete practices, blocking any deletion or alteration request once created, introducing delays for these requests, and blocking destructive actions from customers, for example.

Protecting company data is ultimately a multi-layered approach. But as the threat landscape continues to evolve, businesses need to stay proactive and work with their IT service providers to understand how the services they provide can grow their defenses against these evolving risks, including AI-powered DDoS attacks and WormGPT.

Staying green in a hybrid cloud environment

While companies are actively working to optimize their cloud spending, there’s a growing focus on the environmental footprint they leave behind. Traditionally centered on direct emissions, the rising demand to address Scope 3 emissions is exerting pressure on businesses. Shifting away from physical servers and data centers used to be sufficient, but with the demands of hybrid cloud setups, businesses are now turning to greener hosting providers that prioritise energy efficiency.

In the context of regulations and initiatives across Europe, including the Netherlands and Germany, addressing the environmental impact of data centers will continue to shift. As businesses are expected to comply with transparent reporting expectations, working with a responsible hosting provider helps align them with current and future environmental regulations, mitigating potential regulatory risks.

Mainstream adoption of self-hosted large-language models

ChatGPT first propelled AI to mainstream consciousness back when it was first introduced in 2022. Since then, companies of all sizes have tried to harness and integrate generative AI into their business practices to streamline the worker and customer experience. We can expect to see the rise of adoption of self-hosted large-language models within hosting services, which can in turn empower businesses with advanced capabilities for customer engagement, content creation, data analysis, and overall operational efficiency.

Containersation and deploying Kubernetes

Simplifying Kubernetes usage is an important part of a smart cloud strategy. With Kubernetes becoming a go-to for managing containerised applications, it’s more important than ever to manage costs and ensure resources are used effectively. Containers will play a key role in this, contributing to cost optimisation, reducing time-to-market, maximising resource utilisation and lowering infrastructure overhead.

If you’re considering optimising your cloud strategy or are eager to explore how managed hosting can bolster your business needs with a future-ready infrastructure, don’t hesitate to connect with our experts here at vshosting.